Updating my homelab monitoring stack

When I started building my homelab I deployed a very rudimentary monitoring stack consisting mostly of Uptime Kuma and Beszel. Eventually I opted for a more powerful stack and replaced Beszel with Grafana for dashboards, Prometheus as the time series database (TSDB) for metrics, and various exporters such as Node Exporter and cAdvisor to collect those metrics.

Lately, however, I felt the urge to improve on that. Until now I had shied away from making any changes and I just did not have the mental capacity to dive deep into a new topic. There is also always the question of whether I need something as sophisticated and professional as Grafana, Prometheus, etc. in my small personal homelab. Ultimately I decided it would be fun to learn something new.

So when I read an article about Alloy last week, I decided to adopt it for my homelab as well. With Alloy I can replace most of the exporters I was running to collect metrics. It comes bundled with a variety of integrations; Node Exporter and cAdvisor, for example, are already included. Something I want to look at in the future is replacing the PVE Exporter I run for my Proxmox host with the OpenTelemetry collector, as an integration exists as of PVE 9. I went ahead and replaced all exporters with Alloy across 6 different machines, which went mostly smoothly thanks to the great examples in the alloy-scenarios repository. Since I was already working on the stack, I decided to go even further:

-

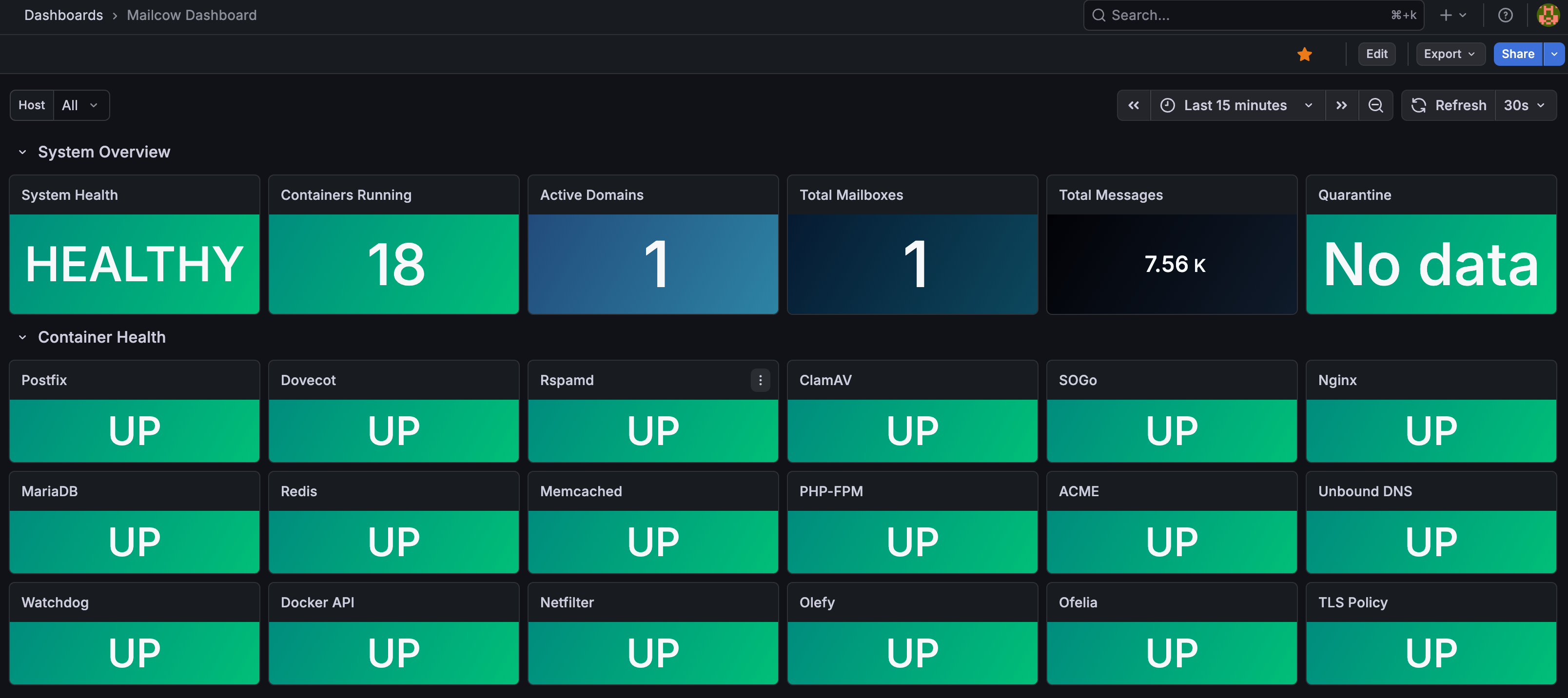

I added a mailcow exporter for my mail server to have better visibility about whats going on without logging into the admin interface

-

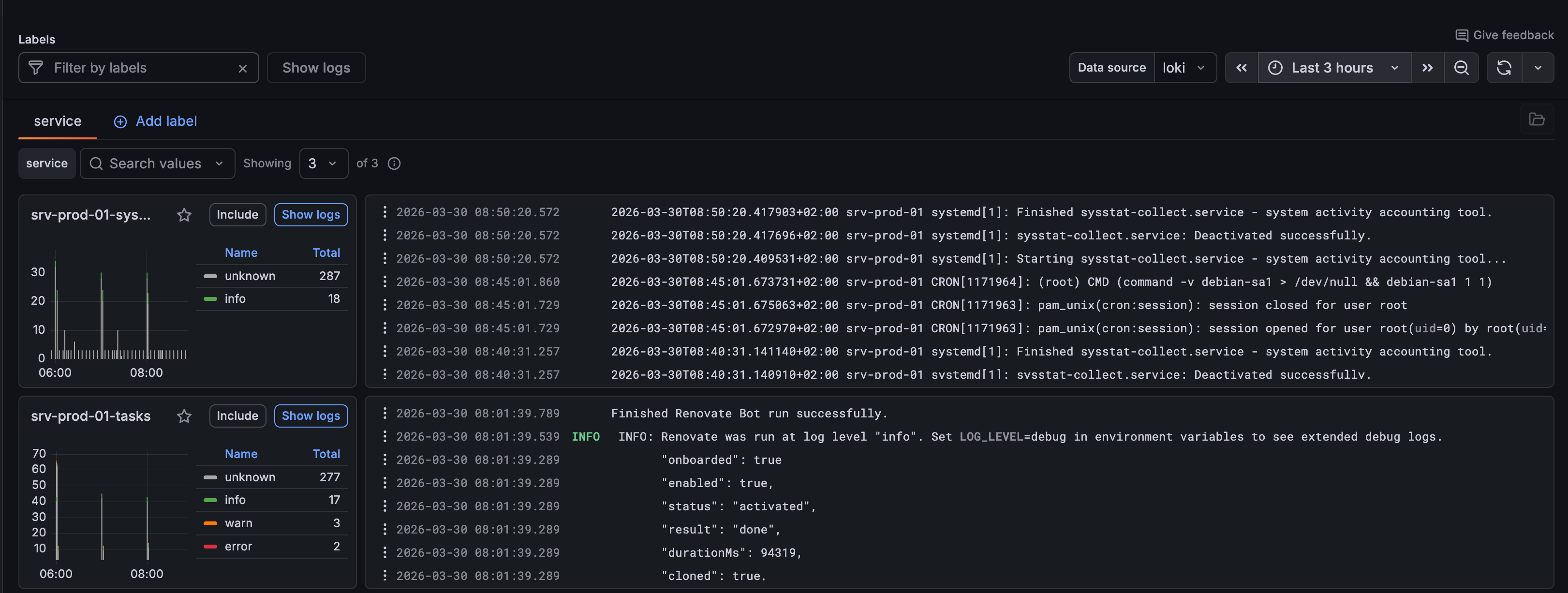

I decided to add Loki as a database for logs as collecting logs is pretty easy with alloy as well and with the latest changes everything was in place already

Since configuring Loki and Alloy correctly is a bit involved, here is a quick rundown of the configuration I currently have deployed:

Loki Configuration:

    ---
    auth_enabled: false

    server:
      http_listen_port: 3100

    common:
      instance_addr: 127.0.0.1
      path_prefix: /loki
      storage:
        filesystem:
          chunks_directory: /loki/chunks
          rules_directory: /loki/rules
      replication_factor: 1
      ring:
        kvstore:
          store: inmemory

    schema_config:
      configs:
        - from: 2020-10-24
          store: tsdb
          object_store: filesystem
          schema: v13
          index:
            prefix: index_
            period: 24h

    limits_config:
      retention_period: "7d"
      ingestion_rate_mb: 4
      ingestion_burst_size_mb: 6
      max_streams_per_user: 10000
      max_line_size: 256000
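One caveat on the retention setting: in Loki, retention_period under limits_config is only enforced when the compactor runs with retention enabled. Without it, old chunks are not deleted. The following is a minimal sketch of the extra section, assuming Loki 3.x and the same /loki path prefix as above. The working directory is an assumption, not part of the deployed config.

```yaml
# Sketch only: compactor section that makes limits_config.retention_period take effect.
compactor:
  # Assumption: any writable directory below the /loki path prefix works here.
  working_directory: /loki/compactor
  # Without this flag the compactor only compacts the index and never deletes data.
  retention_enabled: true
  # Required in Loki 3.x whenever retention_enabled is true.
  delete_request_store: filesystem
```

Retention through the compactor also requires an index period of 24h, which the schema_config above already uses.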

Alloy Metrics (metrics.alloy):

    // Collectors
    prometheus.exporter.unix "integrations_node_exporter" {
      disable_collectors = ["ipvs", "btrfs", "infiniband", "xfs", "zfs"]
      enable_collectors = ["meminfo"]

      filesystem {
        fs_types_exclude = "^(autofs|binfmt_misc|bpf|cgroup2?|configfs|debugfs|devpts|devtmpfs|tmpfs|fusectl|hugetlbfs|iso9660|mqueue|nsfs|overlay|proc|procfs|pstore|rpc_pipefs|securityfs|selinuxfs|squashfs|sysfs|tracefs)$"
        mount_points_exclude = "^/(dev|proc|run/credentials/.+|sys|var/lib/docker/.+)($|/)"
        mount_timeout = "5s"
      }

      netclass {
        ignored_devices = "^(veth.*|cali.*|[a-f0-9]{15})$"
      }

      netdev {
        device_exclude = "^(veth.*|cali.*|[a-f0-9]{15})$"
      }
    }

    prometheus.exporter.cadvisor "integrations_cadvisor" {
      docker_only = true
    }

    // Relabeling
    discovery.relabel "integrations_node_exporter" {
      targets = prometheus.exporter.unix.integrations_node_exporter.targets

      rule {
        target_label = "instance"
        replacement = constants.hostname
      }

      rule {
        target_label = "job"
        replacement = "integrations/node_exporter"
      }
    }

    discovery.relabel "integrations_cadvisor" {
      targets = prometheus.exporter.cadvisor.integrations_cadvisor.targets

      rule {
        target_label = "job"
        replacement = "integrations/docker"
      }

      rule {
        target_label = "instance"
        replacement = constants.hostname
      }
    }

    // Scrapers
    prometheus.scrape "integrations_node_exporter" {
      scrape_interval = "30s"
      targets = discovery.relabel.integrations_node_exporter.output
      forward_to = [prometheus.remote_write.local.receiver]
    }

    prometheus.scrape "integrations_cadvisor" {
      scrape_interval = "30s"
      targets = discovery.relabel.integrations_cadvisor.output
      forward_to = [prometheus.remote_write.local.receiver]
    }

    // Targets
    prometheus.remote_write "local" {
      endpoint {
        url = "http://prometheus:9090/api/v1/write"
      }
    }
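One thing to keep in mind with this push-based setup: prometheus.remote_write sends samples to Prometheus, and Prometheus only accepts them on /api/v1/write when it is started with the --web.enable-remote-write-receiver flag. The following is a sketch of how that could look in Docker Compose, assuming Prometheus runs as a service named prometheus on the same external network. The image reference and the omitted volumes are assumptions, not the actual deployment.

```yaml
# Sketch only: Prometheus service that accepts remote write from Alloy.
services:
  prometheus:
    image: prom/prometheus # assumption: pin a version and digest as for the Alloy image
    container_name: prometheus
    restart: unless-stopped
    command:
      # Overriding the command replaces the image defaults, so restate them.
      - --config.file=/etc/prometheus/prometheus.yml
      - --storage.tsdb.path=/prometheus
      # Off by default; enables the /api/v1/write endpoint used by Alloy.
      - --web.enable-remote-write-receiver
    networks:
      - socket-network

networks:
  socket-network:
    external: true
```

With Alloy pushing all metrics, the scrape_configs section in prometheus.yml can stay empty for the hosts covered by Alloy.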

Alloy Logs (logs.alloy):

    loki.source.journal "logs_integrations_integrations_node_exporter_journal_scrape" {
      max_age = "24h0m0s"
      relabel_rules = discovery.relabel.logs_integrations_integrations_node_exporter_journal_scrape.rules
      forward_to = [loki.write.local.receiver]
      path = "/var/log/journal"
      labels = {component = string.format("%s-journal", constants.hostname)}
    }

    local.file_match "logs_integrations_integrations_node_exporter_direct_scrape" {
      path_targets = [
        {
          __address__ = "localhost",
          __path__ = "/var/log/{syslog,messages,*.log}",
          instance = constants.hostname,
          job = string.format("%s-system", constants.hostname),
        },
        {
          __address__ = "localhost",
          __path__ = "/var/log/tasks/**/*.log",
          instance = constants.hostname,
          job = string.format("%s-tasks", constants.hostname),
          log_type = "tasks",
        },
        {
          __address__ = "localhost",
          __path__ = "/var/log/traefik/**/*.log",
          instance = constants.hostname,
          job = string.format("%s-traefik", constants.hostname),
          log_type = "traefik",
        },
      ]
    }

    discovery.relabel "logs_integrations_integrations_node_exporter_journal_scrape" {
      targets = []

      rule {
        source_labels = ["__journal__systemd_unit"]
        target_label = "unit"
      }

      rule {
        source_labels = ["__journal__boot_id"]
        target_label = "boot_id"
      }

      rule {
        source_labels = ["__journal__hostname"]
        target_label = "instance"
      }

      rule {
        source_labels = ["__journal__machine_id"]
        target_label = "machine_id"
      }

      rule {
        source_labels = ["__journal__transport"]
        target_label = "transport"
      }

      rule {
        source_labels = ["__journal_priority_keyword"]
        target_label = "level"
      }
    }

    loki.source.file "logs_integrations_integrations_node_exporter_direct_scrape" {
      targets = local.file_match.logs_integrations_integrations_node_exporter_direct_scrape.targets
      forward_to = [loki.write.local.receiver]
    }

    loki.write "local" {
      endpoint {
        url = "http://loki:3100/loki/api/v1/push"
      }
    }

Alloy docker compose configuration:

    ---
    services:
      alloy:
        image: grafana/alloy:v1.14.2@sha256:eadfe35ea52b26cbec4d4d780fbcc31edb31108c1c9e537ca59557a5a102c712
        hostname: srv-prod-01
        container_name: alloy
        restart: unless-stopped
        volumes:
          - /path/to/alloy/configs/directory:/etc/alloy/config
          - "/:/rootfs:ro"
          - /var/run:/var/run:ro
          - "/sys:/sys:ro"
          - /var/lib/docker/:/var/lib/docker:ro
          - /dev/disk/:/dev/disk:ro
          - /var/log:/var/log
          - /run/log/journal:/run/log/journal:ro
          - /etc/machine-id:/etc/machine-id:ro
        env_file:
          - .env
        networks:
          - socket-network
        command: run --server.http.listen-addr=0.0.0.0:12345 --storage.path=/var/lib/alloy/data /etc/alloy/config

    networks:
      socket-network:
        external: true

Again, the alloy-scenarios repository was of tremendous help in coming up with the right configuration. You might also have noticed that I split the Alloy configuration for collecting metrics and logs into separate files. It is possible to pass a directory instead of a single config file to Alloy, and it will automatically pick up all files with the .alloy file extension. I think this makes the various config files way easier to read and modify. Once everything is set up correctly, you can start visualizing the data in your Grafana instance.
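On the Grafana side, the two data sources can also be provisioned from a file instead of being added through the UI. The following is a minimal sketch, assuming Grafana runs on the same Docker network and reaches Prometheus and Loki under the host names used in the Alloy configs above. The file path and data source names are assumptions.

```yaml
# Hypothetical file: /etc/grafana/provisioning/datasources/homelab.yaml
apiVersion: 1

datasources:
  # Metrics pushed by Alloy via prometheus.remote_write.
  - name: Prometheus
    type: prometheus
    access: proxy
    url: http://prometheus:9090
    isDefault: true
  # Logs pushed by Alloy via loki.write.
  - name: Loki
    type: loki
    access: proxy
    url: http://loki:3100
```

Grafana reads files in its provisioning directory on startup, so the data sources survive a rebuild of the container.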

Not only am I now able to look at my various dashboards and get a quick overview of the overall health of my systems, I can also dig further into a problem without having to grep log files on my different hosts. I can also improve my dashboards with aggregated log metrics, for example to visualize failed SSH attempts or to analyze the access log of my Traefik reverse proxy.

One thing still on my to-do list is to look at the Grafana Alertmanager integration. I already have alerting set up in my homelab through ntfy and mailrise, but now that logs are available in Grafana I might be able to extract even more valuable data from them and be notified immediately in case of an emergency.
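To give an idea of what a log-based alert could look like, here is a sketch of a rule in the Prometheus-style format that the Loki ruler understands. It assumes the ruler is enabled and connected to an Alertmanager, neither of which is part of the Loki config shown earlier. The file path, alert name, and threshold are made up for illustration. The LogQL expression is the interesting part, and the same query could back a Grafana-managed alert instead.

```yaml
# Hypothetical rule file, e.g. /loki/rules/fake/ssh.yaml
# ("fake" is the tenant ID Loki uses when auth_enabled is false).
groups:
  - name: ssh
    rules:
      - alert: ManyFailedSshLogins
        # Count "Failed password" lines per host over 5 minutes, using the
        # unit and instance labels set by the journal relabel rules.
        # The unit name differs between distributions (ssh vs. sshd).
        expr: |
          sum by (instance) (
            count_over_time({unit=~"ssh.service|sshd.service"} |= "Failed password" [5m])
          ) > 20
        for: 5m
        labels:
          severity: warning
        annotations:
          summary: "Many failed SSH logins on {{ $labels.instance }}"
```

The threshold of 20 is arbitrary and would need tuning against the normal background noise of the host.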

Currently I store logs for 7 days. Over the coming days I will monitor disk usage to get a sense of how much data I am producing and whether it's worth increasing or decreasing this retention limit. In conclusion, I am pretty happy with how things currently stand, and whenever the need comes up I will have all the required information readily at hand. Along the way I learned a bunch of new things, and I count that as the biggest win of all.
